Lecture 01: The Geometry of Linear Equations

The fundamental problem of linear algebra is to solve n linear equations in n unknowns, for example:

$$
\begin{aligned}
2x - y &= 0 \\
-x + 2y &= 3
\end{aligned}
$$

In the first lecture, Dr. Strang shows us three ways to view this problem: the row picture, the column picture, and the matrix picture.

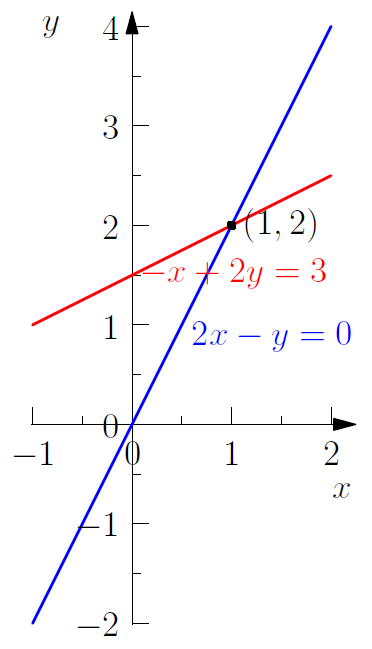

Row Picture

Plot the points that satisfy each equation. The intersection of the plots (if they intersect) is the solution of the system above, which here is (x, y) = (1, 2).

We substitute (1, 2) into the original system of equations to check its validity:

$$
\begin{aligned}
2 \cdot 1 - 2 &= 0 \\
-1 + 2 \cdot 2 &= 3
\end{aligned}
$$
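The substitution check can be sketched in a few lines of Python. This is a minimal illustration, not part of the lecture: the helper name `satisfies` is hypothetical, and the coefficients assume the example system 2x − y = 0, −x + 2y = 3.

```python
# Hypothetical helper: test a candidate point against every row equation.
def satisfies(rows, rhs, point, tol=1e-12):
    """Return True if each equation sum(a_i * x_i) = b holds at `point`."""
    return all(
        abs(sum(a * v for a, v in zip(row, point)) - b) <= tol
        for row, b in zip(rows, rhs)
    )

# Assumed example system: 2x - y = 0 and -x + 2y = 3.
rows = [[2, -1], [-1, 2]]
rhs = [0, 3]

print(satisfies(rows, rhs, (1, 2)))  # True: the point lies on both lines
print(satisfies(rows, rhs, (0, 0)))  # False: (0, 0) is only on the first line
```

A point that passes the check lies on every line (or plane) of the row picture at once, which is exactly what "intersection" means here.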

Similarly, the solution of a three-dimensional system (three equations in three unknowns) is the common point of intersection of three planes, if such a point exists.

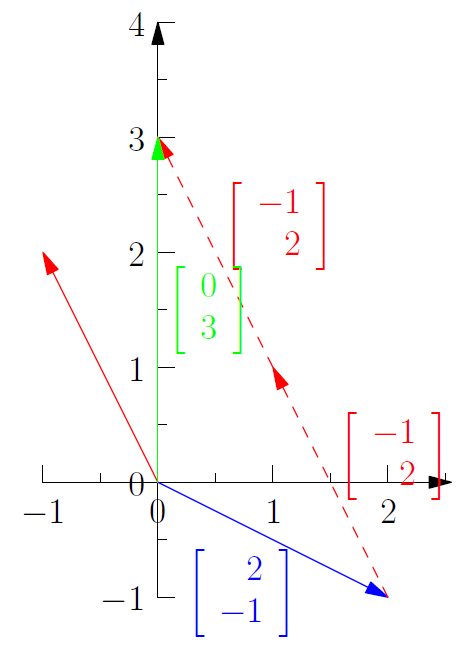

Column Picture

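In the column picture, we rewrite the system as a single equation by turning the coefficients in each column of the system into vectors:

$$
x \begin{bmatrix} 2 \\ -1 \end{bmatrix} + y \begin{bmatrix} -1 \\ 2 \end{bmatrix} = \begin{bmatrix} 0 \\ 3 \end{bmatrix}
$$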

Given two vectors c and d and scalars x and y, the sum xc + yd is called a linear combination of c and d. Linear combinations are an important concept throughout linear algebra.

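Geometrically, we are looking for numbers x and y such that x copies of the vector $\begin{bmatrix} 2 \\ -1 \end{bmatrix}$ added to y copies of the vector $\begin{bmatrix} -1 \\ 2 \end{bmatrix}$ equal the vector $\begin{bmatrix} 0 \\ 3 \end{bmatrix}$. As shown in Figure 2, x = 1 and y = 2, agreeing with the result we got from the row picture.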

In three dimensions, the column picture requires us to find a linear combination of three 3-dimensional vectors that equals the vector b.
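As a rough sketch of the column picture, the following Python computes a linear combination of column vectors component by component. The function name `linear_combination` is an illustrative choice, and the vectors c and d assume the two-dimensional example with columns (2, −1) and (−1, 2).

```python
def linear_combination(scalars, vectors):
    """Return sum_i scalars[i] * vectors[i], computed one component at a time."""
    dim = len(vectors[0])
    result = [0] * dim
    for s, v in zip(scalars, vectors):
        for i in range(dim):
            result[i] += s * v[i]
    return result

# Assumed columns of the example system.
c = [2, -1]
d = [-1, 2]

# x = 1 copy of c plus y = 2 copies of d.
print(linear_combination([1, 2], [c, d]))  # [0, 3]
```

The same function works unchanged for three 3-dimensional vectors, since it only relies on the vectors sharing a common length.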

Matrix Picture

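Rewrite the system of equations as a single equation by using matrices and vectors:

$$
\begin{bmatrix} 2 & -1 \\ -1 & 2 \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix} = \begin{bmatrix} 0 \\ 3 \end{bmatrix}
$$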

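The matrix $A = \begin{bmatrix} 2 & -1 \\ -1 & 2 \end{bmatrix}$ is called the coefficient matrix. The vector $\mathbf{x} = \begin{bmatrix} x \\ y \end{bmatrix}$ is the vector of unknowns. The values on the right-hand side of the equations form the vector $\mathbf{b}$:

$$
A\mathbf{x} = \mathbf{b}
$$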

The three-dimensional matrix picture is similar to the two-dimensional one, except that the vectors and matrices increase in size.

Matrix Multiplication

How do we multiply a matrix A by a vector x?

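The method Dr. Strang suggests is to think of the entries of x as the coefficients of a linear combination of the column vectors of the matrix, for example:

$$
\begin{bmatrix} 2 & 5 \\ 1 & 3 \end{bmatrix} \begin{bmatrix} 1 \\ 2 \end{bmatrix} = 1 \begin{bmatrix} 2 \\ 1 \end{bmatrix} + 2 \begin{bmatrix} 5 \\ 3 \end{bmatrix} = \begin{bmatrix} 12 \\ 7 \end{bmatrix}
$$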

This technique shows that Ax is a linear combination of the columns of A.

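You can also calculate the product Ax by taking the dot product of each row of A with the vector x:

$$
\begin{bmatrix} 2 & 5 \\ 1 & 3 \end{bmatrix} \begin{bmatrix} 1 \\ 2 \end{bmatrix} = \begin{bmatrix} 2 \cdot 1 + 5 \cdot 2 \\ 1 \cdot 1 + 3 \cdot 2 \end{bmatrix} = \begin{bmatrix} 12 \\ 7 \end{bmatrix}
$$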
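The two ways of computing Ax can be compared side by side in plain Python. This is a sketch for illustration only: the function names `matvec_by_columns` and `matvec_by_rows` are hypothetical, and the matrix and vector are assumed sample values.

```python
def matvec_by_columns(A, x):
    """Ax as a linear combination of the columns of A, weighted by entries of x."""
    n_rows, n_cols = len(A), len(A[0])
    result = [0] * n_rows
    for j in range(n_cols):          # take column j of A ...
        for i in range(n_rows):
            result[i] += x[j] * A[i][j]  # ... and add x[j] copies of it
    return result

def matvec_by_rows(A, x):
    """Ax as the dot product of each row of A with x."""
    return [sum(a * v for a, v in zip(row, x)) for row in A]

# Assumed sample matrix (stored as a list of rows) and vector.
A = [[2, 5], [1, 3]]
x = [1, 2]

print(matvec_by_columns(A, x))  # [12, 7]
print(matvec_by_rows(A, x))     # [12, 7]
```

Both functions perform the same multiplications and additions; they differ only in the order in which the terms are accumulated, which is why the results always agree.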

Linear Independence

In the column and matrix pictures, the right-hand side of the equation is a vector b. Given a matrix A, if we can solve

$$
A\mathbf{x} = \mathbf{b}
$$

for every possible b, we say that A is an invertible (non-singular) matrix. This means that the linear combinations of its column vectors fill the whole xy-plane (in the two-dimensional case). Otherwise, we say that A is a singular matrix: its column vectors are linearly dependent, so all linear combinations of those vectors lie on a single point or line (in the two-dimensional case) and do not fill the whole space.
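For a 2×2 matrix, one way to test whether the columns are dependent is to check whether they lie on the same line through the origin. The sketch below uses the quantity ad − bc (the 2×2 determinant, a tool these notes have not introduced) as that test. The function name `columns_dependent` and the second sample matrix are assumptions made for illustration.

```python
def columns_dependent(A, tol=1e-12):
    """True if the two columns of a 2x2 matrix lie on one line through the origin.

    For A = [[a, b], [c, d]], the columns (a, c) and (b, d) are parallel
    (or one of them is zero) exactly when a*d - b*c equals 0.
    """
    (a, b), (c, d) = A
    return abs(a * d - b * c) <= tol

# Assumed example: columns (2, -1) and (-1, 2) point in different directions.
print(columns_dependent([[2, -1], [-1, 2]]))  # False: combinations fill the plane

# Hypothetical singular matrix: the second column is twice the first.
print(columns_dependent([[1, 2], [2, 4]]))    # True: combinations stay on one line
```

In the dependent case, Ax = b can only be solved when b happens to lie on the line spanned by the columns, so the system fails for most right-hand sides.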